This article is the first in a series that will explore how click risk, traffic quality, and the decisions made around them shape campaign outcomes for advertisers.

A high-performance engine depends on quality fuel. Put the wrong fuel in, and it degrades, clogs, and slows, and so does everything it powers. The same is true for your digital advertising optimization engine.

Traffic quality has long been treated as a cost of doing business. Anti-fraud and measurement companies have helped turn the tide, shining light into the shadows. But invalid traffic still infiltrates campaigns, and advertisers have largely left it to the ecosystem to sort out.

Traffic quality isn’t just a wasted spend problem. It’s a performance problem. When your optimization models can’t tell the difference between a real user and a bot, an engaged shopper and someone who clicked because they were paid to, they learn from whatever shows up. Bidding strategy starts optimizing toward the wrong audience. Spend flows toward placements that look productive but aren’t. Performance plateaus in ways that don’t trace back to any obvious cause. You can optimize everything else perfectly and still not get there.

All roads lead to your data

The sources of that risk may take different forms, but they all have the same effect. Bots and scrapers generate engagement that was never real. Incentivized traffic comes from real people with no real intent, users who clicked because something rewarded them for it, not because your product meant anything to them. AI crawlers aren’t trying to defraud anyone, but they land on pages, trigger events, and leave signals behind that look like behavior. Some of them don’t even announce themselves. And their volume is growing. In 2025, automated traffic grew eight times faster than human traffic. The data your optimization models are learning from is increasingly non-human, and that gap is widening.

When invalid traffic isn’t filtered out before reaching your data, it arrives on the page and generates sessions, clicks, and conversion events, which flow into your measurement and optimization stack the same way legitimate traffic does. Smart bidding platforms ingest them. Audience models are shaped by them. Attribution reports count them. By the time any of it surfaces in a dashboard, it has already influenced decisions.
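To make that flow concrete, here is a minimal sketch of filtering sessions before they reach bidding or attribution. It assumes each session already carries a classification label; the `Session` type, the label names, and the `events_for_optimization` helper are all hypothetical and are not taken from any specific product.

```python
from dataclasses import dataclass

# Hypothetical label for traffic that should reach the optimization stack.
# A real classifier would define its own taxonomy.
VALID_LABEL = "human"


@dataclass
class Session:
    session_id: str
    placement: str
    classification: str  # assumed labels: "human", "bot", "incentivized", "ai_crawler"
    converted: bool


def events_for_optimization(sessions):
    """Return only the sessions labeled as human, in their original order."""
    return [s for s in sessions if s.classification == VALID_LABEL]


# Made-up sessions for illustration only.
sessions = [
    Session("s1", "placement_a", "human", True),
    Session("s2", "placement_a", "bot", True),
    Session("s3", "placement_b", "incentivized", False),
    Session("s4", "placement_b", "human", False),
    Session("s5", "placement_a", "ai_crawler", False),
]

clean_sessions = events_for_optimization(sessions)
print([s.session_id for s in clean_sessions])
```

Without a step like this, the bot conversion in `s2` would be counted exactly like the human conversion in `s1`.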

How invalid traffic clogs your optimization engine

The campaign probably looks like it’s working. Spend is flowing and the optimization engine has learned from weeks of data, but the conversions aren’t following. There’s no obvious culprit in the dashboard, because the engine only learns from what it is fed. If enough of those signals came from traffic that was never going to convert, it didn’t learn to find your best customers. It learned to find more of the same. And by the time that becomes apparent, the damage has already been compounding for weeks.

Exclusion lists, bid adjustments, and audience refinements are all valid optimization levers. But they only work if the data driving them is clean. When it isn’t, you’re not optimizing. You’re just making confident decisions on a compromised foundation.
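A small worked example shows how this plays out. The sketch below uses entirely made-up click counts and a hypothetical validity flag to compare per-placement conversion rates before and after invalid traffic is removed. It illustrates the arithmetic only, not how any bidding platform actually computes its signals.

```python
from collections import defaultdict

# Toy, made-up click log: (placement, is_valid_traffic, converted).
clicks = (
    [("placement_a", True, True)] * 5
    + [("placement_a", True, False)] * 95
    + [("placement_b", True, True)] * 1
    + [("placement_b", True, False)] * 49
    + [("placement_b", False, True)] * 9    # conversion events from invalid traffic
    + [("placement_b", False, False)] * 41
)


def conversion_rates(rows, valid_only=False):
    """Compute conversions divided by clicks for each placement."""
    totals = defaultdict(int)
    conversions = defaultdict(int)
    for placement, is_valid, converted in rows:
        if valid_only and not is_valid:
            continue  # skip traffic flagged as invalid
        totals[placement] += 1
        conversions[placement] += int(converted)
    return {p: conversions[p] / totals[p] for p in totals}


raw = conversion_rates(clicks)
clean = conversion_rates(clicks, valid_only=True)

print("raw:  ", raw)
print("clean:", clean)
```

In the raw data, `placement_b` appears to convert at twice the rate of `placement_a` (10% versus 5%). Once the invalid rows are excluded, it converts at 2%, so a bid adjustment made on the raw numbers would move budget in the wrong direction.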

Where good enough stops being good enough

The place to address that foundation is the page, where advertisers can take greater control and change the outcome. The advertiser’s data gets made from the moment someone clicks an ad and arrives on the page, and that is the earliest point at which they can see what actually showed up. Pre-bid tools make decisions before traffic arrives. Post-bid measurement looks back at what happened. Neither operates at the moment traffic lands and starts generating signals.

That moment, between the click and the page load, is where the visit enters the data. Most tools focused on this space are measuring the ad or the environment it runs in, not the traffic itself. And where page-level measurement does exist, it tends to catch the obvious offenders. The picture gets blurry with the sophisticated cases: incentivized traffic that behaves like a real user, AI crawlers that look like engaged visitors, and the harder-to-classify sessions that sit in the gray zone. Knowing what’s actually in that gray zone is what makes the difference between optimization decisions you can defend and ones you can’t.

HUMAN’s analysis of more than one quadrillion interactions puts a number on that gray zone: only half a percentage point separates the rate of benign automation from malicious automation. At that margin, the gray zone isn’t an edge case. It’s the whole problem.

Closing the visibility gap with Page Intelligence

But what if you could see exactly what was arriving on your pages? Which sessions represented real intent, which were bots, which were incentivized clicks that were never going to convert? That visibility changes what’s possible, not just for fraud prevention, but for every optimization decision that follows.

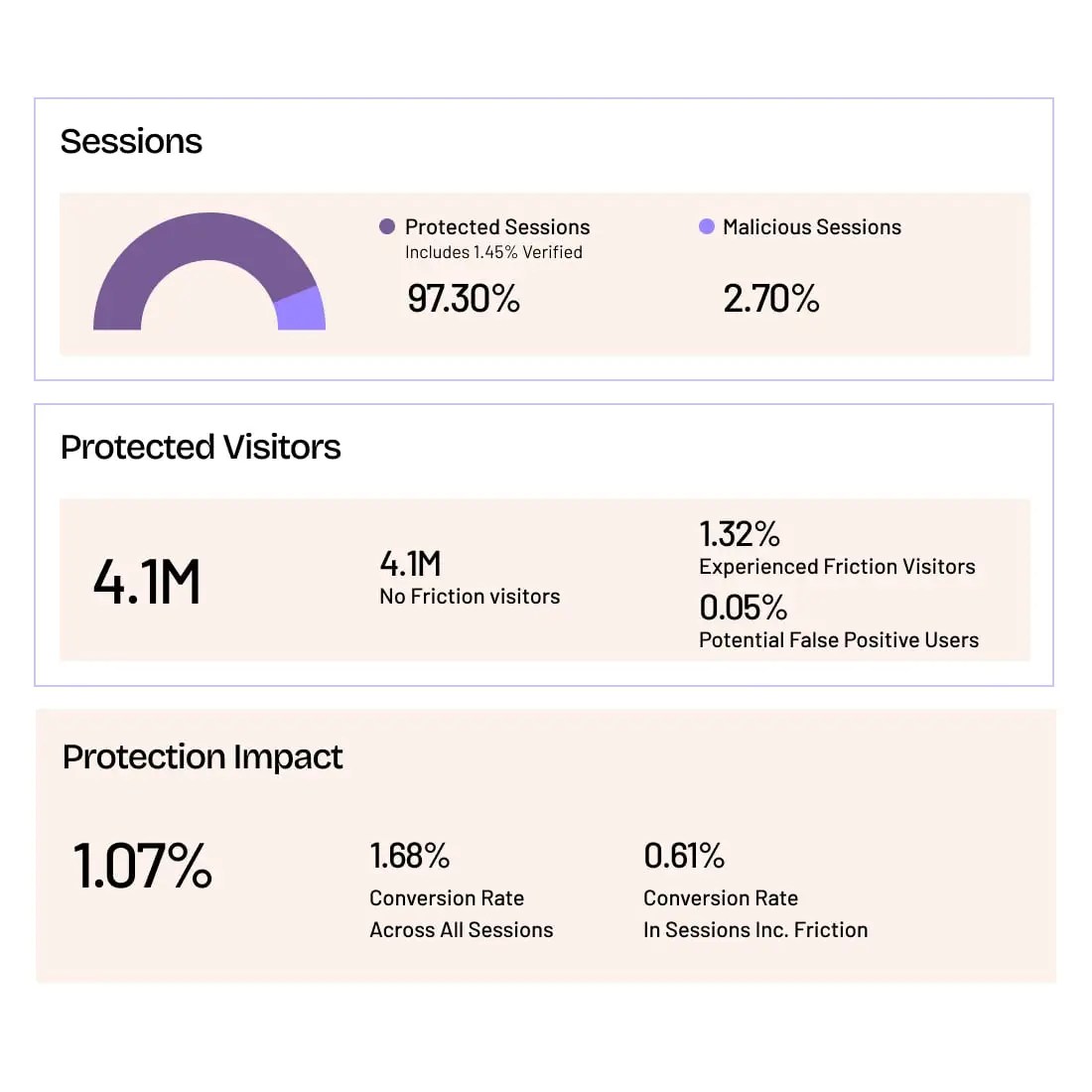

HUMAN’s Page Intelligence delivers a trust layer at the page level that gives advertisers direct visibility into what’s actually arriving on their pages. It renders before the page loads, classifying the full spectrum of traffic risk in real time, from standard invalid traffic and bots to incentivized clicks and AI crawlers. The result is a cleaner picture of who is actually there, one that separates the real customers worth optimizing toward from the traffic that was never going to convert. Audience segments reflect genuine behavior. Bid strategy learns from signals that meant something. Performance metrics become something you can trust, and the foundation your campaigns are built on gets stronger with every cycle.

Performance runs on quality traffic

Traffic quality and campaign performance have always been connected. The industry has just treated them as separate problems, addressed in separate conversations, with separate tools. But the data doesn’t respect that division. What arrives on the page shapes what the optimization engine learns, and what the optimization engine learns shapes where the budget goes next. Closing that gap is a performance decision as much as anything else, and for most advertisers it’s one that’s overdue.

To learn more about how Page Intelligence can give you the visibility you need to truly trust your traffic and the performance results that follow, request a demo today.
